AI risk assessment is the structured process of identifying, analyzing, and prioritizing the threats that AI systems pose to an organization, its customers, and wider society. For US companies, this is no longer a theoretical exercise. The NIST AI Risk Management Framework establishes a voluntary but influential standard. The EU AI Act mandates documented risk assessments for high-risk AI systems used in or affecting the European market. State and local legislation in Colorado, Illinois, and New York is adding domestic requirements. Getting AI risk assessment right is the difference between responsible deployment and regulatory exposure.

Why AI Risks Require a Different Assessment Approach

Traditional IT risk assessments focus on system availability, data confidentiality, and infrastructure integrity. AI systems introduce a layer of complexity that existing frameworks were not built to handle.

Outputs are probabilistic, not deterministic. Conventional software generally produces the same output for the same input every time. An AI model generates predictions from learned statistical patterns, so identical inputs can yield different results across model versions and retraining runs, and, for generative models that sample their outputs, from one call to the next. This makes failure modes harder to predict and test.

Bias is a technical and legal risk simultaneously. An AI system used for hiring, lending, or insurance can produce outcomes that disproportionately affect protected groups, even when the system was not explicitly programmed to discriminate. The Equal Employment Opportunity Commission has made clear that employers bear responsibility for AI-driven disparate impact, regardless of whether the tool was developed in-house or purchased from a vendor.

Models degrade over time. Data drift, concept drift, and distribution shift mean that a model performing well at deployment may produce unreliable results six months later as the underlying data patterns change. Unlike traditional software, which remains stable until modified, AI systems can silently lose accuracy without any code changes.
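One common way to make drift visible is to compare the distribution of a feature at deployment with its distribution in recent traffic. The sketch below computes a Population Stability Index (PSI) for a single numeric feature. The function name, the bin count, and the rule-of-thumb reading of the result (values above roughly 0.25 are often treated as a material shift) are illustrative assumptions, not requirements drawn from NIST or ISO.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    recent: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a baseline sample and a recent sample of one feature.

    Bin edges are derived from the baseline range; recent values that fall
    outside that range are clamped into the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid a zero width for constant features

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(recent)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


if __name__ == "__main__":
    at_deployment = [i / 100 for i in range(100)]
    six_months_later = [x + 0.5 for x in at_deployment]  # simulated shift
    print(population_stability_index(at_deployment, at_deployment))
    print(population_stability_index(at_deployment, six_months_later))
```

A check like this runs without any change to the model code, which is exactly the situation in which silent degradation would otherwise go unnoticed.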

The attack surface is different. AI systems are vulnerable to adversarial attacks, prompt injection, data poisoning, model extraction, and membership inference attacks. Most of these threat vectors have no direct equivalent in traditional software security and require specialized assessment methods.

Frameworks That Structure the Assessment

Two frameworks dominate the AI risk assessment landscape for US organizations. Using them in combination provides the most complete coverage.

NIST AI Risk Management Framework (AI RMF 1.0)

Released in January 2023 by the National Institute of Standards and Technology, the AI RMF is a voluntary, sector-agnostic framework organized around four core functions: Govern, Map, Measure, and Manage. NIST developed it with input from over 240 organizations spanning industry, academia, civil society, and government.

The Govern function establishes the organizational policies, roles, and risk tolerances that shape every subsequent assessment activity. Map identifies the context, purpose, and potential impacts of each AI system. Measure employs quantitative and qualitative methods to evaluate risks against trustworthiness characteristics. Manage allocates resources to treat risks through mitigation, transfer, acceptance, or avoidance.

The companion AI RMF Playbook provides suggested actions for each subcategory, and the Generative AI Profile (NIST AI 600-1) extends the framework specifically for generative AI systems.

ISO/IEC 42001:2023

ISO/IEC 42001 is the first certifiable international standard for AI Management Systems (AIMS). It follows the ISO Plan-Do-Check-Act methodology and requires organizations to conduct both AI risk assessments (Clause 8.2) and AI impact assessments (Clause 8.4) as part of a documented management system. Certification requires compliance with 38 controls organized across 9 control objectives, covering risk management, data governance, model transparency, bias mitigation, and human oversight.

Where NIST AI RMF provides voluntary guidance with no formal certification, ISO 42001 offers a certifiable standard that can be independently audited. Organizations preparing for both EU AI Act compliance and US regulatory expectations often implement ISO 42001 as their governance backbone and use NIST AI RMF to fill in operational details.

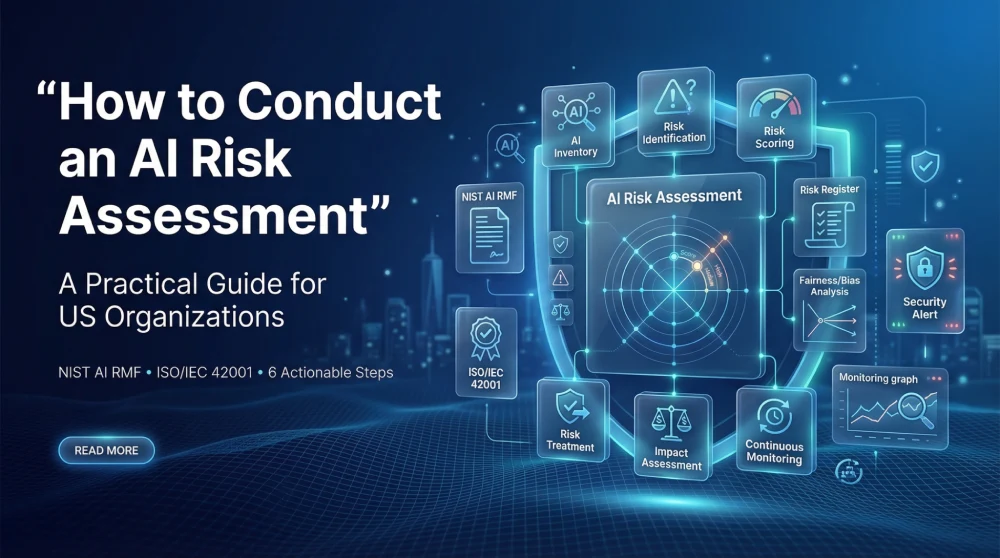

Six Steps to Conduct an AI Risk Assessment

The following process synthesizes best practices from NIST AI RMF, ISO 42001, and practical implementation experience. It is designed to work for organizations at different maturity levels, from those conducting their first assessment to those formalizing an existing program.

1. Build Your AI System Inventory

You cannot assess risks you do not know exist. The first step is cataloging every AI system across the organization, including systems built internally, purchased from vendors, embedded in SaaS platforms, and shadow AI projects running outside formal governance.

For each system, document the owner, intended purpose, data sources, deployment environment, the decisions it informs or automates, and the populations it affects. Automated discovery helps here. Scanning code repositories for machine learning library imports (TensorFlow, PyTorch, scikit-learn), tracking cloud billing for GPU compute spikes, and auditing software procurement records all surface AI systems that manual surveys miss.
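As a rough illustration of repository scanning, the sketch below walks a directory of Python files and reports which ones import TensorFlow, PyTorch, or scikit-learn. The function names and the `ML_LIBRARIES` set are hypothetical, and the approach only covers Python source; notebooks, other languages, and dependency manifests would need their own checks.

```python
import ast
from pathlib import Path

# Top-level import names for TensorFlow, PyTorch, and scikit-learn.
ML_LIBRARIES = {"tensorflow", "torch", "sklearn"}


def ml_imports_in_source(source: str) -> set:
    """Return the ML libraries imported by one Python source string."""
    found = set()
    try:
        tree = ast.parse(source)
    except (SyntaxError, ValueError):
        return found  # unparseable file: skip rather than fail the scan
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]
        else:
            continue
        for name in names:
            top_level = name.split(".")[0]
            if top_level in ML_LIBRARIES:
                found.add(top_level)
    return found


def scan_repository(root: str) -> dict:
    """Map each .py file under root to the ML libraries it imports."""
    hits = {}
    for path in Path(root).rglob("*.py"):
        try:
            source = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue
        libraries = ml_imports_in_source(source)
        if libraries:
            hits[str(path)] = libraries
    return hits


if __name__ == "__main__":
    for file_path, libraries in sorted(scan_repository(".").items()):
        print(file_path, sorted(libraries))
```

Each hit is a lead to follow up with the owning team, not proof of a production AI system; the inventory entry still needs the owner, purpose, and affected populations filled in by people.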

Classify each system by inherent risk level. A spam filter and an AI-powered credit decisioning tool are not the same conversation. The NIST AI RMF Map function provides a structured approach to contextualizing each system, while the EU AI Act’s four-tier classification (unacceptable, high, limited, minimal risk) offers a useful regulatory overlay for systems touching the European market.

2. Identify Risks Across Five Dimensions

For each AI system in the inventory, map risks across five categories. A risk identification workshop that includes data scientists, product managers, legal counsel, compliance officers, and representatives from affected business units produces the most complete picture.

| Risk Category | What to Assess | Example |
|---|---|---|
| Bias and Fairness | Disparate impact across protected groups; training data representativeness; proxy variables | Resume screening tool scoring women lower for engineering roles because historical hiring data was male-dominated |
| Security | Adversarial attacks, prompt injection, data poisoning, model theft, supply chain risks | Attacker manipulating input images to fool a medical diagnostic model into misclassifying a tumor |
| Privacy | Training data containing PII; model memorization; re-identification risk; CCPA/GDPR compliance | Language model reproducing verbatim personal information from training data when prompted |
| Reliability | Data drift, concept drift, edge case performance, hallucination, failure mode analysis | Supply chain forecasting model degrading after a market disruption changed purchasing patterns |
| Compliance | EU AI Act obligations, state AI laws, sector-specific regulations, contractual requirements | High-risk AI system deployed in the EU without a conformity assessment or technical documentation |

Threat modeling tools adapted for AI systems, including STRIDE, DREAD, and OWASP’s Machine Learning Security Top 10, provide structured checklists for the security dimension. For bias, techniques like disparate impact ratio analysis, equalized odds testing, and demographic parity evaluation surface quantitative evidence of unfairness.
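The three bias techniques named above reduce to simple comparisons of rates between groups. The following is a minimal sketch using plain Python lists; the function names are illustrative, predictions and labels are assumed to be binary (1 = favorable outcome), and the 0.8 cut-off mentioned in the comments is the commonly cited four-fifths rule of thumb rather than a legal bright line.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Share of favorable (1) predictions within each group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for g, p in zip(groups, predictions):
        totals[g] += 1
        positives[g] += int(p == 1)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(groups, predictions):
    """Lowest group selection rate divided by the highest.

    Values below about 0.8 are often flagged for review.
    """
    rates = selection_rates(groups, predictions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


def demographic_parity_difference(groups, predictions):
    """Gap between the highest and lowest group selection rates."""
    rates = selection_rates(groups, predictions)
    return max(rates.values()) - min(rates.values())


def _positive_rate_where(groups, labels, predictions, label_value):
    """Per-group rate of favorable predictions among rows with a given true label."""
    totals, hits = defaultdict(int), defaultdict(int)
    for g, y, p in zip(groups, labels, predictions):
        if y == label_value:
            totals[g] += 1
            hits[g] += int(p == 1)
    return {g: hits[g] / totals[g] for g in totals}


def equalized_odds_gaps(groups, labels, predictions):
    """Return (TPR gap, FPR gap) across groups; both near 0 is the goal."""
    def gap(rates):
        return max(rates.values()) - min(rates.values()) if rates else 0.0

    tpr = _positive_rate_where(groups, labels, predictions, 1)
    fpr = _positive_rate_where(groups, labels, predictions, 0)
    return gap(tpr), gap(fpr)


if __name__ == "__main__":
    groups = ["a"] * 10 + ["b"] * 10
    predictions = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7
    labels = [1] * 5 + [0] * 5 + [1] * 5 + [0] * 5
    print(disparate_impact_ratio(groups, predictions))
    print(demographic_parity_difference(groups, predictions))
    print(equalized_odds_gaps(groups, labels, predictions))
```

These numbers are evidence for the workshop discussion, not a verdict; which metric matters most depends on the decision the system informs.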

3. Score and Prioritize Each Risk

Not every risk warrants the same level of investment. After identification, assign each risk a score based on two criteria: likelihood (how probable is this risk given current controls?) and impact (if it materializes, how severe are the consequences for the organization, affected individuals, and society?).

A 5×5 likelihood-impact matrix works well for most organizations. Multiply the two scores to produce a composite risk rating, then rank all risks from highest to lowest. Group them into tiers: critical risks requiring immediate action, high risks needing treatment within a defined timeline, medium risks for planned mitigation, and low risks for monitoring.
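The scoring arithmetic can be sketched in a few lines: the composite rating is likelihood multiplied by impact, each on a 1 to 5 scale, giving a range of 1 to 25. The tier cut-offs below (20, 12, 6) are hypothetical placeholders; each organization would set its own based on its risk tolerance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (negligible) to 5 (severe)

    def __post_init__(self):
        for field_name in ("likelihood", "impact"):
            value = getattr(self, field_name)
            if not 1 <= value <= 5:
                raise ValueError(f"{field_name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        """Composite rating on the 5x5 matrix (1 to 25)."""
        return self.likelihood * self.impact


# Hypothetical cut-offs: (minimum score, tier label), checked from the top down.
TIER_THRESHOLDS = [(20, "critical"), (12, "high"), (6, "medium"), (1, "low")]


def tier(score: int) -> str:
    for minimum, label in TIER_THRESHOLDS:
        if score >= minimum:
            return label
    raise ValueError(f"score out of range: {score}")


def prioritize(risks):
    """Rank risks from highest to lowest composite score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    register = [
        Risk("Forecast model drift", likelihood=3, impact=3),
        Risk("Biased resume screening", likelihood=4, impact=5),
        Risk("Prompt injection in support bot", likelihood=3, impact=4),
    ]
    for risk in prioritize(register):
        print(f"{risk.score:>2}  {tier(risk.score):<8}  {risk.name}")
```

A simple product treats a likelihood-2, impact-5 risk the same as a likelihood-5, impact-2 risk; some teams add an override that escalates any risk with maximum impact regardless of its score.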

Key insight: Some impacts, particularly those involving harm to individuals or erosion of fundamental rights, are difficult to express in dollar terms. The NIST AI RMF explicitly recommends focusing on the significance of potential harm rather than attempting to calculate precise probabilities. An AI system that could deny someone housing or employment carries a different weight than one that could misclassify a product recommendation.

4. Define and Implement Risk Treatments

For each prioritized risk, select a treatment strategy. The four standard options apply:

- Mitigate: Implement controls that reduce the likelihood or impact of the risk. This includes technical measures (fairness-aware model training, adversarial robustness testing, data quality pipelines) and organizational measures (human-in-the-loop review, escalation procedures, documentation requirements).

- Transfer: Shift the risk to another party through insurance, contractual allocation, or outsourcing to a specialized vendor. Cyber insurance policies increasingly include AI-specific riders that require documented risk assessments as coverage prerequisites.

- Avoid: Decide not to deploy the AI system or to withdraw it from a specific use case. If a risk assessment reveals that a model cannot be made sufficiently fair for a high-stakes decision, avoiding that deployment is a legitimate governance outcome.

- Accept: Formally acknowledge the residual risk and document the rationale. This should only happen when the residual risk falls within the organization’s defined risk tolerance and the decision is approved at the appropriate governance level.

Map each treatment to the specific ISO 42001 Annex A controls it satisfies. This dual mapping, connecting each risk to both a treatment action and a compliance control, creates the documentation trail that auditors and regulators expect.
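One way to keep this dual mapping consistent is a small register entry that ties each risk to a treatment, an action, and one or more control identifiers, with a check that flags incomplete entries. Everything in the sketch below is illustrative: the class and field names are hypothetical, and the control identifiers are placeholders, not real ISO 42001 Annex A references.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Treatment(Enum):
    MITIGATE = "mitigate"
    TRANSFER = "transfer"
    AVOID = "avoid"
    ACCEPT = "accept"


@dataclass
class RegisterEntry:
    risk: str
    treatment: Treatment
    action: str
    control_ids: List[str] = field(default_factory=list)  # placeholder IDs only
    residual_score: Optional[int] = None  # score after treatment, if assessed
    approved_by: Optional[str] = None     # governance sign-off for acceptance


def validation_problems(entry: RegisterEntry, risk_tolerance: int) -> List[str]:
    """List the gaps an auditor would likely question for one entry."""
    problems = []
    if not entry.control_ids:
        problems.append("no compliance control mapped")
    if entry.treatment is Treatment.ACCEPT:
        if entry.approved_by is None:
            problems.append("acceptance has no recorded approver")
        if entry.residual_score is None:
            problems.append("residual risk has not been scored")
        elif entry.residual_score > risk_tolerance:
            problems.append("residual risk exceeds defined tolerance")
    return problems


if __name__ == "__main__":
    entry = RegisterEntry(
        risk="Biased resume screening",
        treatment=Treatment.MITIGATE,
        action="Add human review for all rejections",
        control_ids=["CONTROL-PLACEHOLDER-1"],
    )
    print(validation_problems(entry, risk_tolerance=6))
```

The acceptance checks mirror the conditions described for the Accept option: the residual risk must sit within tolerance and someone with authority must have approved it.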

5. Conduct an AI Impact Assessment

ISO 42001 Clause 8.4 requires a separate AI impact assessment for systems that pose significant potential effects on individuals, communities, or society. While the risk assessment in Steps 2 through 4 focuses mainly on consequences for the organization, the impact assessment examines external effects: could this system cause discrimination? Could it undermine democratic participation? Could it create physical safety hazards?

The impact assessment should evaluate effects on fundamental rights (privacy, non-discrimination, freedom of expression), societal effects (labor market displacement, information ecosystem integrity), and environmental impact (compute-related energy consumption and carbon footprint). ISO/IEC 42005:2025 provides detailed guidance for structuring these assessments.

For US companies, the AI impact assessment also prepares you for state and local requirements. Colorado’s AI Act, effective mid-2026, requires deployers of high-risk AI systems to complete impact assessments and developers to supply the documentation those assessments depend on. NYC Local Law 144 already mandates bias audits for automated employment decision tools. Building a robust impact assessment process now creates a repeatable capability that adapts as regulations proliferate.

6. Monitor, Review, and Reassess Continuously

An AI risk assessment is not a one-time project. It is a recurring process that must adapt as models are retrained, use cases shift, data distributions change, and regulations evolve.

Establish continuous monitoring that tracks model performance metrics (accuracy, precision, recall, F1 score), fairness indicators (demographic parity, equalized odds), security alerts (adversarial input detection, anomalous query patterns), and operational indicators (latency, error rates, fallback frequency). Define thresholds that trigger reassessment, such as a 5% drop in model accuracy from the deployment baseline, a statistically significant shift in demographic parity ratios, or the identification of a new adversarial technique in your threat landscape.
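Reassessment triggers of this kind can be expressed as a small check that compares a current monitoring window with the deployment baseline. The sketch below makes several assumptions that should be read as illustrative: the 5% accuracy drop is interpreted as a relative drop, the fairness shift is approximated with a two-proportion z-test on one monitored group's selection rate, and the 1.96 critical value corresponds to a two-sided 5% significance level. All names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Window:
    accuracy: float
    group_positives: int  # favorable predictions for the monitored group
    group_total: int      # all predictions for the monitored group
    new_adversarial_technique: bool = False  # set by the security team


def selection_rate_z(base: Window, cur: Window) -> float:
    """Two-proportion z statistic for the change in the group's selection rate."""
    pooled = (base.group_positives + cur.group_positives) / (
        base.group_total + cur.group_total
    )
    se = math.sqrt(
        pooled * (1 - pooled) * (1 / base.group_total + 1 / cur.group_total)
    )
    if se == 0:
        return 0.0
    base_rate = base.group_positives / base.group_total
    cur_rate = cur.group_positives / cur.group_total
    return (cur_rate - base_rate) / se


def reassessment_triggers(
    base: Window,
    cur: Window,
    max_relative_accuracy_drop: float = 0.05,
    z_critical: float = 1.96,
) -> List[str]:
    """Return the reasons, if any, that a formal reassessment is due."""
    reasons = []
    relative_drop = (base.accuracy - cur.accuracy) / base.accuracy
    if relative_drop >= max_relative_accuracy_drop:
        reasons.append("accuracy_drop")
    if abs(selection_rate_z(base, cur)) > z_critical:
        reasons.append("selection_rate_shift")
    if cur.new_adversarial_technique:
        reasons.append("new_adversarial_technique")
    return reasons


if __name__ == "__main__":
    baseline = Window(accuracy=0.90, group_positives=500, group_total=1000)
    this_month = Window(accuracy=0.84, group_positives=400, group_total=1000)
    print(reassessment_triggers(baseline, this_month))
```

Returning the list of reasons, rather than a single boolean, gives the governance team a record of why a reassessment was opened.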

Schedule formal reassessments at least annually for all AI systems and prior to any deployment of new AI functionality. The ISO 42001 management system approach builds this cadence into the PDCA cycle, ensuring that risk assessments are maintained as living documents rather than point-in-time snapshots.

Common Mistakes That Undermine AI Risk Assessments

Assessing the model in isolation from its deployment context. A model’s risk profile changes based on who uses it, what decisions it informs, and what populations it affects. A sentiment analysis model used for market research carries different risks than the same model used to evaluate employee performance. Always assess the system-in-context, not the algorithm in the abstract.

Treating the assessment as a one-time compliance exercise. AI systems change. Data drifts. Regulations evolve. An assessment completed at deployment and never revisited creates a false sense of security. Build reassessment triggers into your governance process.

Excluding non-technical stakeholders from the process. Data scientists understand model performance. Legal teams understand regulatory exposure. Business owners understand operational consequences. Affected communities understand lived impact. An AI risk assessment conducted only by the engineering team will miss risks that are obvious to everyone else in the room.

Confusing model performance metrics with risk metrics. High accuracy does not mean low risk. A model that is 99% accurate overall but systematically fails for a specific demographic subgroup has a significant fairness risk that aggregate performance metrics will not surface. Always disaggregate performance analysis by relevant population segments.
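The gap between aggregate and disaggregated performance is easy to demonstrate. In the sketch below, with synthetic data and illustrative function names, a model is 99% accurate overall while being no better than a coin flip for a small segment.

```python
from collections import defaultdict


def overall_accuracy(labels, predictions):
    """Fraction of predictions that match the true labels."""
    correct = sum(int(y == p) for y, p in zip(labels, predictions))
    return correct / len(labels)


def accuracy_by_segment(segments, labels, predictions):
    """Accuracy computed separately for each population segment."""
    correct, total = defaultdict(int), defaultdict(int)
    for s, y, p in zip(segments, labels, predictions):
        total[s] += 1
        correct[s] += int(y == p)
    return {s: correct[s] / total[s] for s in total}


if __name__ == "__main__":
    # Synthetic example: 980 records in segment "A", 20 in segment "B".
    segments = ["A"] * 980 + ["B"] * 20
    labels = [1] * 1000
    # Every "A" prediction is right; only half of the "B" predictions are.
    predictions = [1] * 980 + [1] * 10 + [0] * 10

    print(overall_accuracy(labels, predictions))
    print(accuracy_by_segment(segments, labels, predictions))
```

Because segment "B" makes up only 2% of the records, its failures barely move the headline number, which is why the per-segment view belongs in the risk assessment alongside the aggregate metric.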

How ISO/IEC 42001 Structures AI Risk Management

ISO 42001 provides the management system wrapper that transforms ad hoc risk assessments into a sustainable governance program. Clause 6.1 requires organizations to identify risks and opportunities during planning. Clauses 8.2 and 8.3 mandate systematic risk assessment and treatment processes. Clause 8.4 requires AI impact assessments for systems with significant potential effects on individuals, communities, or society. Clauses 9 and 10 establish monitoring, internal audit, and continual improvement requirements.

The standard’s 38 Annex A controls address AI-specific concerns across the full lifecycle: data quality management, model validation, transparency and explainability, bias detection, human oversight, third-party AI supplier management, and incident response. Organizations that have implemented ISO 27001 for information security will find significant structural overlap, as both standards follow the ISO management system architecture. The NIST AI RMF/ISO 42001 crosswalk document maps the relationships between the two frameworks, making it straightforward to implement both in parallel.

For US companies, ISO 42001 certification provides three distinct advantages. It creates a governance backbone that supports compliance with both EU AI Act requirements and emerging US state regulations. It signals credible commitment to responsible AI in vendor due diligence and enterprise procurement. And it establishes the documentation, audit, and improvement practices that regulators increasingly expect, even where certification itself is not mandated.

Building a Risk-Aware AI Program

AI risk assessment is not a bureaucratic obstacle. It is the process that tells you whether an AI system is safe to deploy, what could go wrong, and what you need to do about it. Organizations that embed risk assessment into their AI development lifecycle catch problems when they are cheap to fix, not after they become headlines, lawsuits, or regulatory enforcement actions.

The six-step process outlined here, building an inventory, identifying risks, scoring and prioritizing, implementing treatments, conducting impact assessments, and monitoring continuously, provides a repeatable framework that scales with your AI portfolio. Anchoring this process in established frameworks like NIST AI RMF and ISO 42001 ensures it remains credible, auditable, and aligned with the regulatory direction in both the US and internationally.
