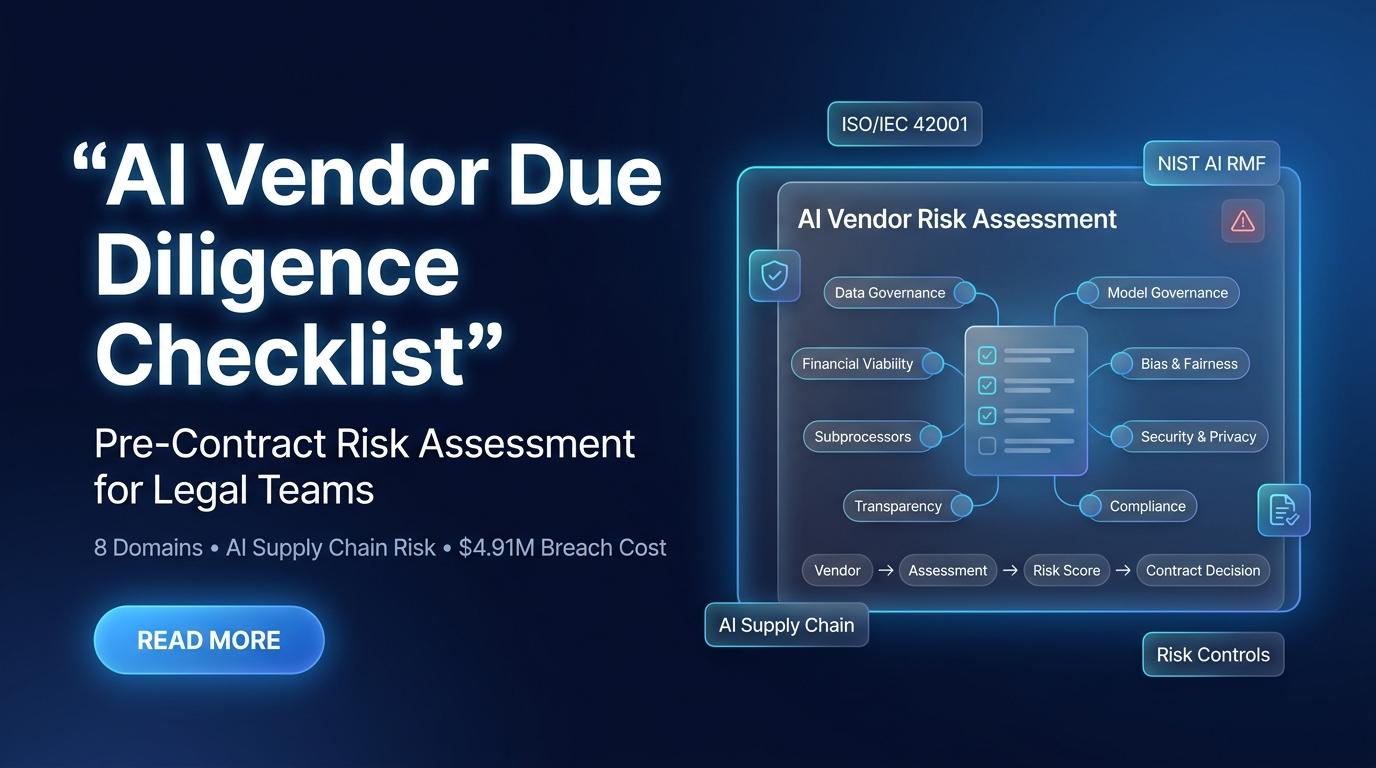

97% of AI-breached organizations lacked access controls. Supply chain attacks cost $4.91M average. Traditional assessment misses model-specific risks. Here is the 8-domain checklist legal teams need before signing.

The AI vendor risk gap: 13% of organizations reported AI breaches (IBM 2025), and 97% of those lacked proper AI access controls. Supply chain compromise costs $4.91M on average. Third-party attacks accounted for 47% of affected individuals. Traditional questionnaires miss training data provenance, model behavior, bias, fourth-party dependencies, and AI-specific compliance.

Traditional vendor risk assessment covers financial stability, uptime, and basic security. AI vendors add layers those questionnaires miss: training data provenance, model behavior under edge cases, algorithmic bias, output reliability, fourth-party AI dependencies, and AI-specific regulatory compliance. The regulatory shift from “intent” to “evidence” means regulators demand proof of pre-deployment assessment. This checklist provides the structured framework.

Why Traditional Assessment Fails for AI

Non-deterministic behavior. Demo performance ≠ production performance. Failures happen at edge cases and scale, not in curated tests.

Training data creates upstream liability. Vendor’s data practices = customer’s legal exposure. FTC disgorgement cascades to customers.

Invisible fourth-party dependencies. Foundation model reliance is rarely disclosed. A vendor may claim ISO 27001 while using an uncertified AI subprocessor.

New, evolving compliance. Colorado, Illinois, NYC, EU AI Act, DPDPA create obligations most vendors haven’t addressed.

Unpredictable costs. Token usage and API costs manageable in pilot, unpredictable at scale.

Step One: Tier Your Vendors

| Tier | Criteria | Assessment Depth | Examples |
|---|---|---|---|
| Tier 1: Critical | Consequential decisions. Sensitive data. Customer-facing. Regulated sector. | Full 8-domain. Deep-dive sessions. Third-party verification. Annual. | Hiring AI, credit scoring, medical diagnostics, chatbots with PII, fraud detection. |
| Tier 2: Significant | Internal tools, moderate exposure. Influences operations, not individual decisions. | 6-domain. Questionnaire-based. Biennial. | Internal analytics, doc summarization, project management AI, content generation. |
| Tier 3: Commodity | Low-risk internal. Non-consequential. No regulated data. | 3-domain abbreviated. Self-certification. Spot checks. | Spell-check, spam filtering, meeting transcription, productivity tools. |
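The tiering rule can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a prescribed implementation: the `VendorProfile` fields and the `assign_tier` function are hypothetical names, and the sketch assumes that any single Tier 1 criterion is enough to place a vendor in Tier 1 (the table does not say whether the criteria are "any" or "all").

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorProfile:
    """Hypothetical intake record; field names are illustrative only."""
    name: str
    makes_consequential_decisions: bool = False
    handles_sensitive_data: bool = False
    customer_facing: bool = False
    regulated_sector: bool = False
    influences_operations: bool = False


# Assessment depth per tier, taken from the table above.
ASSESSMENT_DEPTH = {
    1: {"domains": 8, "method": "deep-dive sessions, third-party verification", "cadence": "annual"},
    2: {"domains": 6, "method": "questionnaire", "cadence": "biennial"},
    3: {"domains": 3, "method": "self-certification", "cadence": "spot checks"},
}


def assign_tier(vendor: VendorProfile) -> int:
    """Return 1, 2, or 3.

    Assumption: any one Tier 1 criterion triggers Tier 1.
    """
    tier_1_triggers = (
        vendor.makes_consequential_decisions,
        vendor.handles_sensitive_data,
        vendor.customer_facing,
        vendor.regulated_sector,
    )
    if any(tier_1_triggers):
        return 1
    if vendor.influences_operations:
        return 2
    return 3
```

Treating the Tier 1 criteria as "any" rather than "all" errs toward over-assessment, which is usually the cheaper mistake; an organization could tighten or loosen that rule to match its own risk appetite.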

The 8-Domain Checklist

Domain 1: Corporate and Financial Viability

Standard assessment plus AI-specific: AI liability insurance, leadership governance expertise, business continuity for model availability, runway/stability if a major customer exits.

Domain 2: Data Governance and Training Data Provenance

Highest-risk domain. Where disgorgement risk originates. Check consent for training data collection, licenses for copyrighted material, data isolation, retention/deletion, and whether the vendor trains on customer data by default.

Domain 3: Model Governance and Technical Quality

Beyond demo performance: model cards, edge case testing, versioning, drift monitoring, hallucination rates.

Domain 4: Bias and Fairness Assessment

Mandatory for Tier 1 in employment, credit, housing. Methodology, protected categories, fairness metrics, audit results, remediation.

Domain 5: Security and Privacy

SOC 2/ISO 27001 plus AI-specific: prompt injection, model extraction, data poisoning, adversarial detection, AI incident response, model weight encryption.

Domain 6: Regulatory Compliance Posture

Does the vendor’s compliance cover the regulations that apply to YOUR use case? Colorado, Illinois, NYC, EU AI Act, DPDPA, CFPB, EEOC, and sector-specific rules (HIPAA, GLBA, FDA).

Domain 7: Transparency and Explainability

Can the system explain decisions (CFPB, EEOC)? Provide disclosure documentation (CA SB 942, EU AI Act Art. 13)? Audit logs for regulatory review? Support human-in-the-loop?

Domain 8: Subprocessor and Fourth-Party Risk

Map the AI supply chain: foundation models used, subprocessors, nth-party risk, certification coverage, contingency for model unavailability.
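One way to make the supply chain map checkable is to record it as a small dependency graph and walk it for certification gaps. The sketch below is illustrative only: the `Party` class, the `uncertified_dependencies` function, and the idea of matching on a single certification label are assumptions, not part of any standard or vendor tool.

```python
from dataclasses import dataclass, field


@dataclass
class Party:
    """A vendor, subprocessor, or foundation model provider (hypothetical record)."""
    name: str
    certifications: set = field(default_factory=set)
    depends_on: list = field(default_factory=list)


def uncertified_dependencies(vendor: Party, required_cert: str) -> list:
    """List every downstream party, at any depth, lacking the required certification.

    The vendor itself is not checked here; only its fourth-party and
    nth-party dependencies are.
    """
    gaps = []
    seen = set()
    stack = list(vendor.depends_on)
    while stack:
        party = stack.pop()
        if party.name in seen:
            continue  # avoid revisiting shared or circular dependencies
        seen.add(party.name)
        if required_cert not in party.certifications:
            gaps.append(party.name)
        stack.extend(party.depends_on)
    return sorted(gaps)
```

A vendor that holds ISO 27001 but relies on a model host without it would show up with that host in the returned list, which is the gap described under fourth-party dependencies earlier in this article.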

Operationalizing: Five Steps

- Centralize AI procurement intake. Single entry point. No contract signed without completed assessment.

- Tier vendors on intake. Classify as Tier 1/2/3. Determines assessment depth.

- Distribute structured questionnaire. Domain-specific questions with deadline (15 days Tier 1, 10 days Tier 2). Require evidence, not assertions.

- Validate independently. Don’t accept self-certification for Tier 1. Request SOC 2, ISO 42001, bias audit reports. Technical deep-dive for critical AI.

- Document for the audit trail. Completed assessment = defensible record of pre-deployment evaluation. Centralized repository. Regulators demand evidence of assessment before deployment.
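The five steps above amount to a gate: no signature until the assessment record is complete. A minimal sketch of that gate follows, assuming the response windows are calendar days and that the caller supplies the list of domains required for the vendor's tier (the article does not specify which domains make up the 6-domain and 3-domain subsets). The `Assessment` class and its method names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional

# Questionnaire response windows from the text; no window is given for Tier 3.
RESPONSE_DAYS = {1: 15, 2: 10}


@dataclass
class Assessment:
    """Hypothetical audit-trail record for one vendor assessment."""
    vendor: str
    tier: int
    sent_on: date
    evidence: dict = field(default_factory=dict)  # domain name -> document reference
    independently_validated: bool = False

    def due_date(self) -> Optional[date]:
        """Questionnaire deadline, or None when the tier has no stated window."""
        days = RESPONSE_DAYS.get(self.tier)
        if days is None:
            return None
        return self.sent_on + timedelta(days=days)

    def ready_to_sign(self, required_domains: list) -> bool:
        """True only if every required domain has evidence on file and,
        for Tier 1, the evidence was independently validated."""
        has_evidence = all(self.evidence.get(d) for d in required_domains)
        validated = self.independently_validated or self.tier != 1
        return has_evidence and validated
```

Storing a document reference per domain, rather than a yes/no flag, keeps the record aligned with the "evidence, not assertions" requirement: an empty entry blocks signing.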

Due diligence → Contract clauses: Assessment determines vendor risk. Contract clauses allocate that risk. The findings from this 8-domain checklist directly inform which of the 12 AI contract clauses need the strongest negotiation for each vendor. Due diligence happens before the contract. Clauses codify what you learned.

Assess Before You Sign. Document Before You Deploy.

The shift from “intent” to “evidence” means proving pre-deployment assessment, not post-breach policies. This 8-domain checklist converts vendor promises into verifiable claims, creates the audit trail regulators demand, and informs the contract clauses that allocate identified risk.

The practical first step: take your next AI vendor evaluation and run it through all eight domains at the appropriate tier depth. The gaps between what you currently assess and what this checklist covers are the unexamined risks your organization is carrying.

GAICC offers ISO/IEC 42001 Lead Implementer training that covers the AI governance standard referenced in the independent-validation step of this checklist. Understanding ISO 42001 enables legal teams to evaluate vendor governance maturity, interpret vendor compliance documentation, and specify ISO 42001 alignment as a procurement requirement. Explore the program to strengthen your due diligence capability.